Anatomy of an enterprise prompt: 7 elements that separate an amateur prompt from a professional one

Most prompts running in production today are designed like Google searches: a question, an answer. They work ~60-70% of the time. The other 30-40% is where the team ends up editing outputs, losing trust in AI, and eventually abandoning the project. What sets a professional enterprise prompt apart is structure. These are the 7 elements we apply in every one of the 50+ prompt engineering projects we've delivered.

- Role — which identity the model assumes (not decoration: it defines tone, vocabulary, confidence level)

- Context — specific business context the model can't infer on its own

- Task — exact action, no ambiguity, with a clear output verb

- Input variables — dynamic data that changes per user/session

- Output format — structure parseable by downstream systems

- Constraints — explicit restrictions and edge cases

- Examples — few-shot learning with real client cases

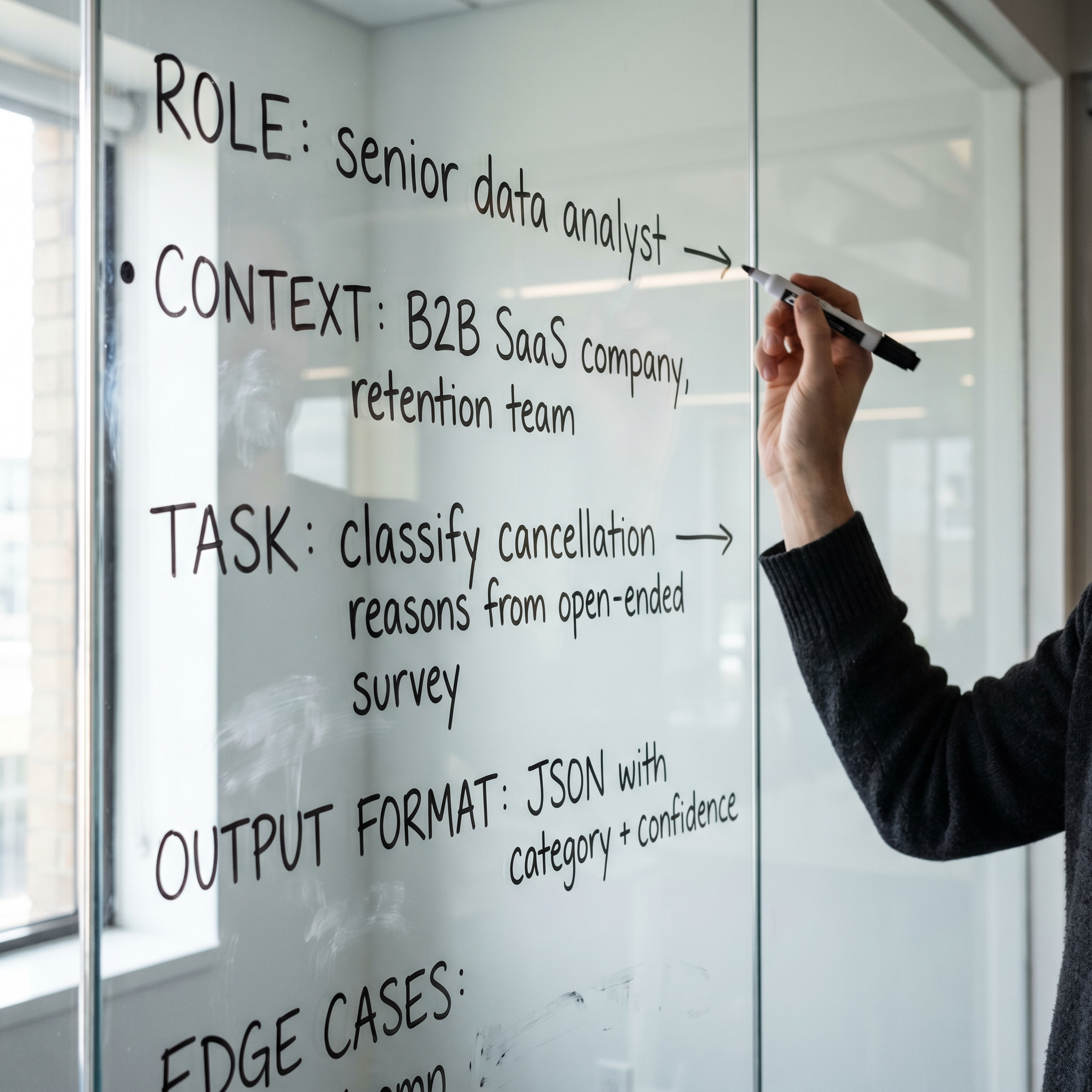

01Role

The most underrated element and the one with the biggest impact. "You are a helpful assistant" isn't a Role — it's a placeholder. A well-designed Role defines the operational identity the model must embody: profession, experience level, communication tone, knowledge domain, and stance toward uncertainty.

Amateur example: "You are an assistant that helps classify tickets."

Professional example:

The second prompt produces ~25% more consistent outputs than the first, without changing anything else in the structure. For one reason: the model models its behavior on the pattern of an expert human, not on the average statistical pattern of "helpful internet assistant."

02Context

Business context is what the model can't infer on its own, no matter how capable Claude or GPT is. It doesn't know your company serves dental clinics in Toronto, that your main product is the line of premium implants for senior patients, or that the average ticket is USD 2,800 and therefore "free consultation" inquiries are cold leads.

Well-designed context is specific, not decorative. It's not "we're an innovative market-leading company" — that doesn't help the model. It's:

With this context, the model can resolve cancellation queries with the right policy, recommend treatments in the right price range, and recognize when the client mentions the TUOTEMPO system without the team having to explain it every time.

03Task

The task is the exact action the model must execute. This is where most amateur prompts fail: vague tasks like "analyze the message and respond" or "help the customer with their inquiry." The model will do something, but not necessarily what you need.

A well-designed Task has a clear output verb and an explicit success criterion:

04Input variables

Professional prompts are templates with dynamic variables, not static text. Every time the prompt runs, it receives data from the user/session context. This multiplies its utility 10x — the same prompt serves 10,000 distinct conversations.

Without dynamic variables, the prompt is glued text. With variables, it's a program executing business logic adapted to each case.

05Output format

If the model output is going to be read by a human, plain text is enough. If it's going to be parsed by another system (CRM, database, downstream prompt), you need explicit structure: JSON, XML, YAML, or custom delimited format.

Structured format is what lets the prompt's output connect to real workflows (n8n, Chatwoot Automation, custom backend) without fragile regex or manual parsing.

06Constraints

Edge cases are where most amateur prompts fail in production. What happens if the ticket is empty? In another language? If it mentions something prohibited by compliance? If it looks like phishing? A professional prompt declares explicit restrictions and expected behavior in each case.

07Examples (few-shot)

The last element, and often the one that boosts consistency the most: include 2-5 correctly-resolved examples in the prompt. This gives the model the exact pattern to follow, much more effective than just describing it abstractly.

With 5 well-selected examples covering the most frequent cases and the most critical edge cases, the model internalizes the pattern better than with 500 words of abstract instructions.

What changes when you apply the 7 elements

In projects where we applied the 7 elements vs amateur prompts from the same clients, the operational difference is:

- Consistency: from 60-70% to 92-96% (output the team can trust without human review)

- Human review time: typical reduction of 70-80%

- Edge cases handled: from 30% to 95%+ (depends on how many cases you document)

- Cross-model portability: the same prompt works on Claude, GPT, Gemini with minimal adjustments

- Maintenance: adding a new case means modifying the prompt in 1 section, not rewriting it all

Is your company already using AI with generic prompts?

We design the 7 prompt elements with real data from your company. Free 30-minute initial diagnosis.

Chat on WhatsAppFrequently asked questions

What are the essential elements of an enterprise prompt?

The 7 essential elements are: Role, Context, Task, Input variables, Output format, Constraints, and Examples. Amateur prompts use 1-2 of these elements. Professionals use all 7 deliberately.

What's the difference between an amateur prompt and a professional one?

An amateur prompt works ~60-70% of the time and produces inconsistent outputs. A professional prompt achieves 90%+ consistency, handles explicit edge cases, has dynamic variables, structured parseable output, and maintenance documentation.

Why don't generic template prompts work in production?

Generic template prompts are designed for "any company" — so they're not designed well for any. They lack specific business context, dynamic variables, real-flow edge cases, and validation with real data.

How do you test a prompt before deploying it to production?

4 phases: 1) Test with synthetic data. 2) Test with real company data. 3) Test known edge cases. 4) A/B test with humans in the loop. Only after passing all 4 phases with consistency >90% does it ship.

How much does designing a professional enterprise prompt cost?

8-20 hours from a senior Engineer, USD 400-1,500 per prompt. Multi-prompt projects start at 25 UF + tax. Typical payback 30-90 days vs the cost of doing the task manually.